Best Practices for AI-Assisted Discovery

A comprehensive guide to getting the best results from Clarity First, from project setup to stakeholder presentation.

1. Setting Up for Success

The quality of your discovery insights depends heavily on how well you set up your project. Clarity First uses your project context to shape the interview questions and focus the analysis.

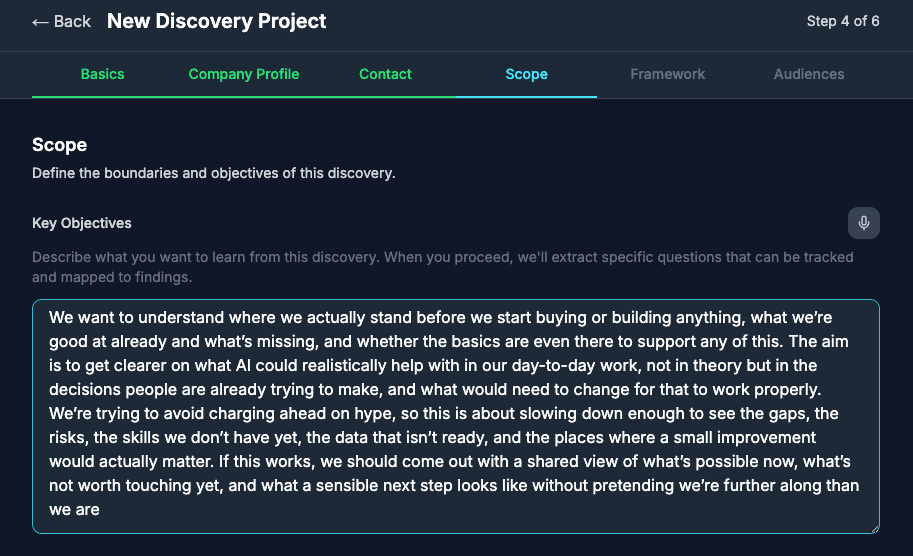

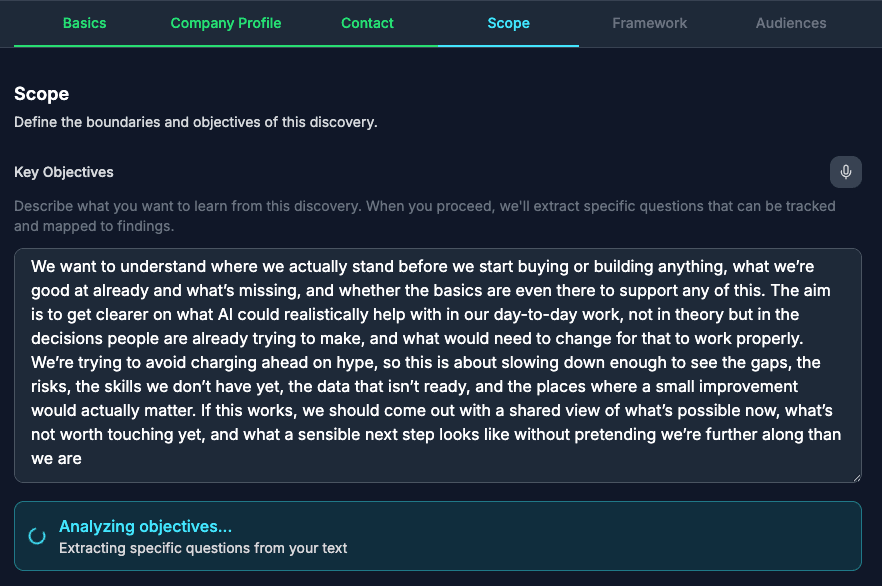

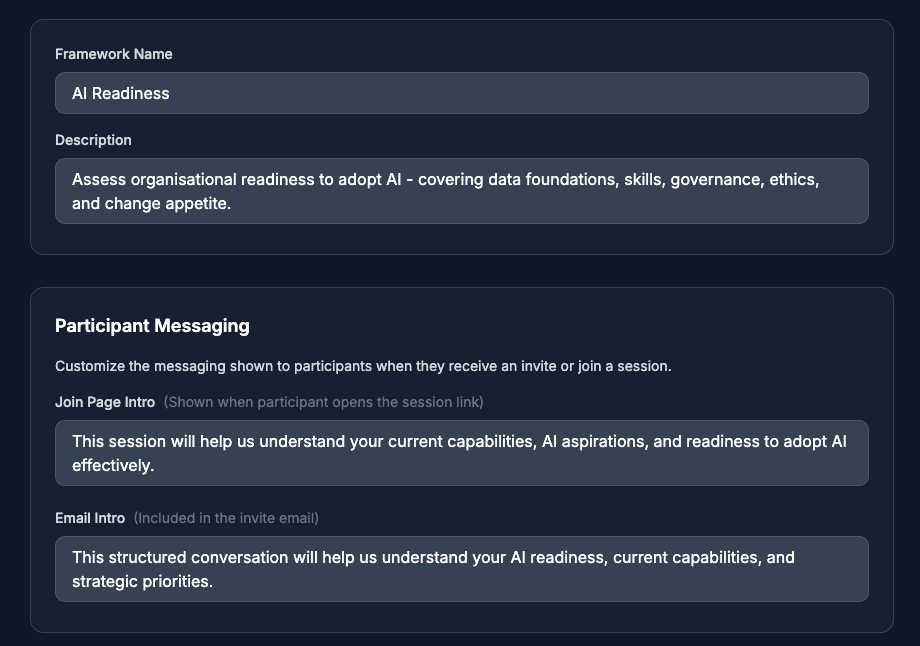

Writing Effective Project Objectives

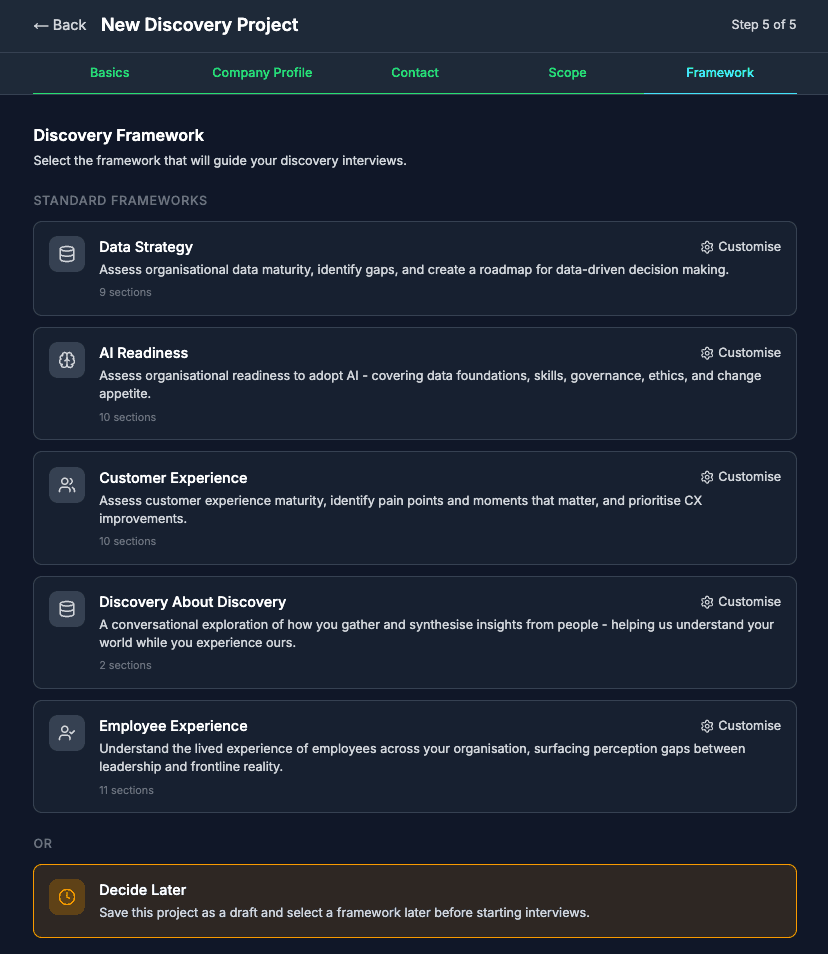

Your project objectives tell the AI what you're trying to learn. Our Bacon agent parses these objectives to create a synthesis brief that guides the entire analysis. The more specific you are, the more targeted your insights will be.

Too vague:

"Understand the current state and identify improvements"

Specific and actionable:

"Identify the top 3 barriers to data sharing between departments and assess appetite for a centralised data platform"

Defining Scope

Clear scope boundaries help participants stay focused and help the AI filter relevant insights. Define what's explicitly in scope and what's out of scope.

- In scope: Topics, departments, processes, or time periods you want to explore

- Out of scope: Areas to explicitly avoid (e.g., "Not covering the US subsidiary" or "Excluding budget discussions")
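It can help to draft objectives and scope as structured data before entering them into the project. The sketch below is illustrative only: `ProjectBrief`, its field names, and the ten-word threshold are hypothetical drafting aids, not part of Clarity First or a description of how Bacon parses objectives.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectBrief:
    """Hypothetical container for drafting a brief; not a Clarity First API."""

    objectives: list[str]
    in_scope: list[str] = field(default_factory=list)
    out_of_scope: list[str] = field(default_factory=list)

    def warnings(self) -> list[str]:
        """Return simple drafting warnings based on the advice in this guide."""
        notes = []
        for objective in self.objectives:
            # Assumed heuristic: very short objectives tend to be generic.
            if len(objective.split()) < 10:
                notes.append(f"Objective may be too vague: {objective!r}")
        if not self.in_scope:
            notes.append("No in-scope topics listed")
        if not self.out_of_scope:
            notes.append("No out-of-scope areas listed")
        return notes


draft = ProjectBrief(
    objectives=["Understand the current state and identify improvements"]
)
for note in draft.warnings():
    print(note)
```

A word count is a crude proxy for specificity. The real test is whether the objective names a decision the discovery will inform.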

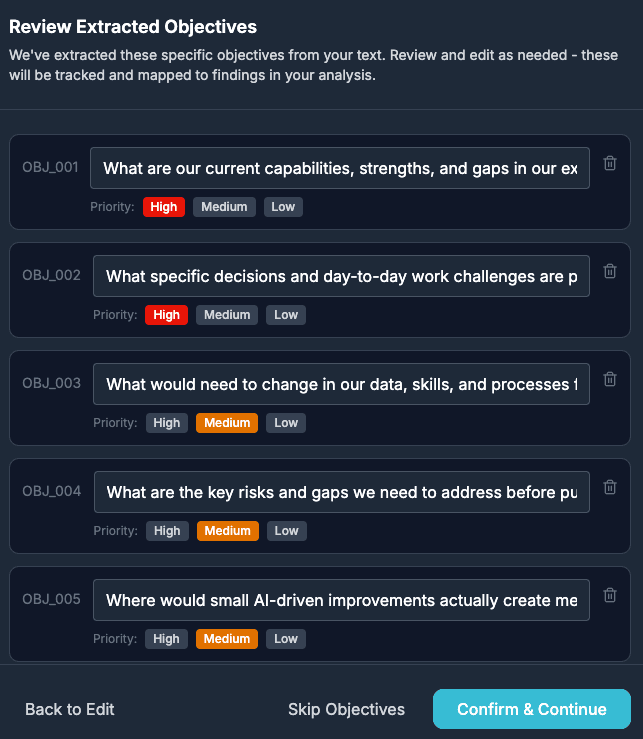

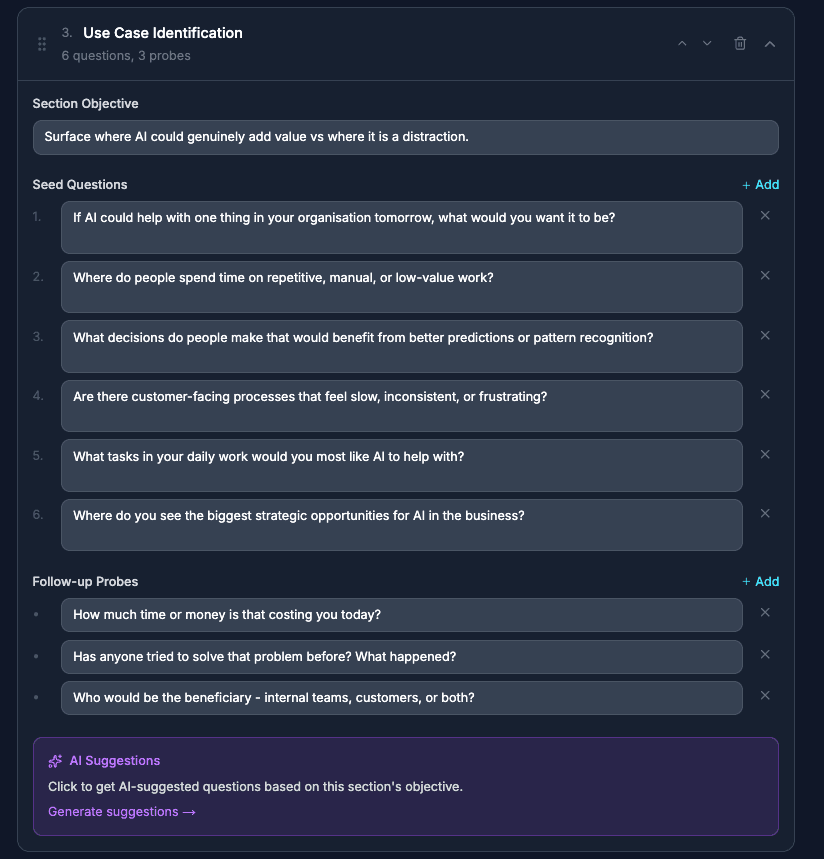

Choosing the Right Framework

Frameworks shape the interview structure and the topics covered. Choose based on your engagement type:

- Data Strategy: For data maturity assessments, analytics capabilities, data governance

- AI Readiness: For AI adoption assessments, use case identification, capability gaps

- Customer Experience: For journey mapping, pain points, service improvement

- Digital Transformation: For technology modernisation, process digitisation

- Custom: Build your own framework for specialist engagements

Client Context Matters

The background information you provide about the client shapes how the AI interprets responses. Include industry, company size, and the engagement trigger (why now?).

2. Preparing Participants

Participant preparation is the single biggest factor in interview quality. A well-briefed participant gives richer, more useful responses. An anxious or confused participant gives guarded, surface-level answers.

The Invitation

Your invitation email sets expectations. Be clear about:

- What it is: A voice conversation — either with our AI interviewer or with a consultant via Microsoft Teams

- Why them: Their perspective is valuable because...

- How long: Typically 20-30 minutes

- What topics: A brief overview (not the specific questions)

- Confidentiality: How their responses will be used and who sees them
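If you send many invitations, a small template keeps all five points in every email. This is a minimal sketch using Python's standard `string.Template`; the wording, the `INVITATION` template, and the `build_invitation` helper are assumptions for illustration, not text or tooling supplied by Clarity First.

```python
from string import Template

# Hypothetical invitation wording covering the five points listed above.
INVITATION = Template(
    "Subject: Invitation to a discovery conversation\n"
    "\n"
    "Hi $name,\n"
    "\n"
    "What it is: a voice conversation with $interviewer.\n"
    "Why you: $reason\n"
    "How long: typically $duration.\n"
    "Topics: $topics\n"
    "Confidentiality: $confidentiality\n"
)


def build_invitation(**fields: str) -> str:
    """Fill the template; substitute() raises KeyError if a point is missing."""
    return INVITATION.substitute(**fields)


email = build_invitation(
    name="Sam",
    interviewer="our AI interviewer",
    reason="You work with the reporting process every day.",
    duration="20-30 minutes",
    topics="Data sharing between departments.",
    confidentiality="Findings are reported by role or department, not by name.",
)
print(email)
```

Using `substitute` rather than `safe_substitute` is deliberate: an invitation with a missing point fails loudly instead of being sent incomplete.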

Setting Expectations

Participants who know what to expect perform better. Key points to communicate:

- It's a conversation, not a test - there are no wrong answers

- They can speak naturally; the AI will follow up on interesting points

- They can ask for clarification if a question isn't clear

- They can skip any question they're not comfortable answering

- The interview is recorded and transcribed for analysis

Addressing Common Concerns

Anticipate and address these common participant worries:

"Will I sound stupid?"

The AI is trained to understand varied communication styles. There's no "right" way to answer. We're interested in your genuine perspective.

"Is this being recorded?"

Yes, the conversation is recorded and transcribed so it can be analysed alongside other interviews. The recording is not shared outside the project team.

"Who will see my responses?"

Your responses are combined with others to identify patterns. Findings are typically reported by role or department, not by name, unless you agree otherwise.

"What if I don't know the answer?"

That's fine - just say so. "I don't know" or "That's outside my area" are perfectly valid responses. The AI will move on to the next topic.

Tips for Different Stakeholder Types

Executives (time-poor)

Emphasise the 20-30 minute duration. Mention that the AI is efficient and won't waste their time with irrelevant questions. Offer flexible scheduling.

Frontline staff (detail-rich)

Encourage them to share specific examples and stories. Their day-to-day perspective is valuable. Reassure them that honest feedback is welcome.

Technical specialists

Let them know the AI can handle technical detail. They don't need to oversimplify. If they use acronyms or jargon, the AI will understand or ask for clarification.

Sceptics

Acknowledge that AI interviews are new. Emphasise that it's about efficiency - freeing up human consultant time for higher-value conversations and recommendations.

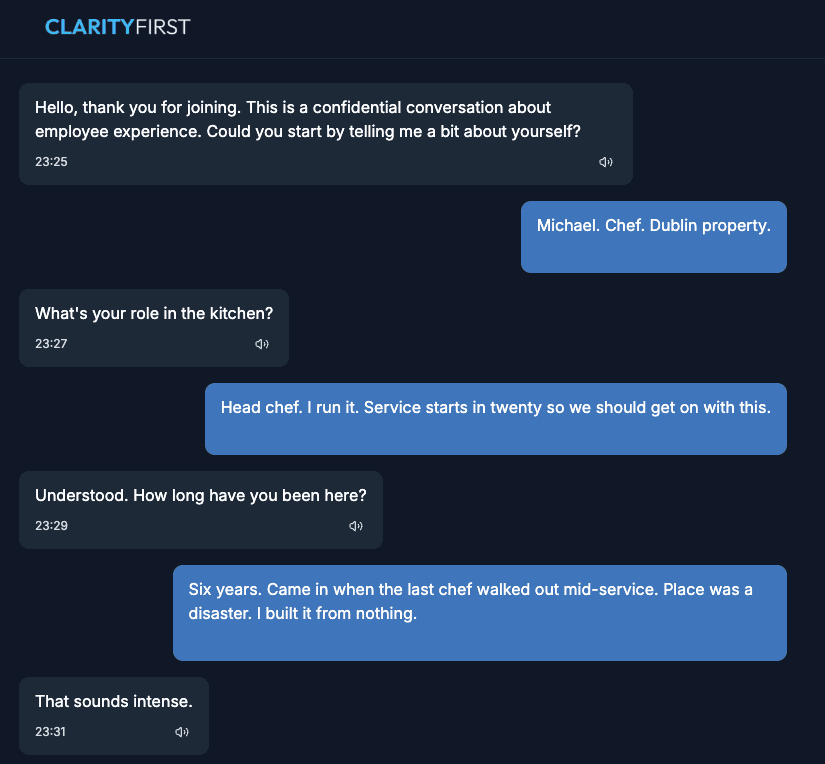

3. Three Interview Modes

Not every stakeholder is suited to the same interview approach. Clarity First supports three modes — AI-led, consultant-led via Teams, and transcript import — so you can match the method to the stakeholder. All three feed into the same analysis pipeline.

1. AI Interviews (Socrates)

The participant receives a link and has a voice or text conversation with Socrates, our AI interviewer. No login, no app install. The AI adapts, follows threads, and probes for depth. Ideal for scale — run 30 interviews in parallel.

2. Consultant Interviews via Teams

The participant schedules a Microsoft Teams call with your consultant. After the meeting, fetch the transcript with one click and it flows into the same analysis pipeline. Teams interviews do not consume credits.

3. Transcript Import

For interviews conducted outside the platform — phone calls, in-person meetings, or other tools — import the transcript directly. The same AI agents analyse it alongside your other interviews.

Availability

Consultant-led Teams interviews and transcript import are available on Pro and Expert plans. See the pricing page for details.

When to Use Each Mode

Offering choice is a strength, not a compromise. Match the mode to the stakeholder:

- AI interviews: Best for volume, consistency, and parallel execution

- Teams interviews: Best for VIPs, sensitive topics, relationship building, and accessibility needs

- Transcript import: Best for interviews already conducted elsewhere
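The guidance above can be written down as a simple rule of thumb. The sketch below is one possible encoding, assuming a hypothetical `suggest_mode` helper and flag names. It is a planning aid for consultants, not platform behaviour, and your own judgment should override it.

```python
from enum import Enum


class Mode(Enum):
    AI = "AI interview"
    TEAMS = "Teams interview"
    IMPORT = "Transcript import"


def suggest_mode(
    already_conducted: bool = False,
    vip: bool = False,
    sensitive_topic: bool = False,
    relationship_building: bool = False,
    accessibility_needs: bool = False,
) -> Mode:
    """Suggest an interview mode from the criteria listed in this guide."""
    if already_conducted:
        # The interview exists already, so only its transcript is needed.
        return Mode.IMPORT
    if vip or sensitive_topic or relationship_building or accessibility_needs:
        return Mode.TEAMS
    # Default: volume, consistency, and parallel execution.
    return Mode.AI


print(suggest_mode(vip=True).value)
print(suggest_mode().value)
```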

Consistency Across All Modes

Regardless of how an interview is conducted, the same analysis process applies:

- Same framework and topic structure

- Same AI agents (Humboldt, Bayes, Darwin, etc.) process the content

- Same synthesis output and evidence linking

- No difference in the final deliverable

The Advocacy Angle

Hybrid positioning is a selling point: “We use AI to handle volume and consistency, while reserving consultant interviews for situations that need a personal touch.” This shows thoughtfulness and positions AI as a tool in your toolkit, not a replacement for judgment.

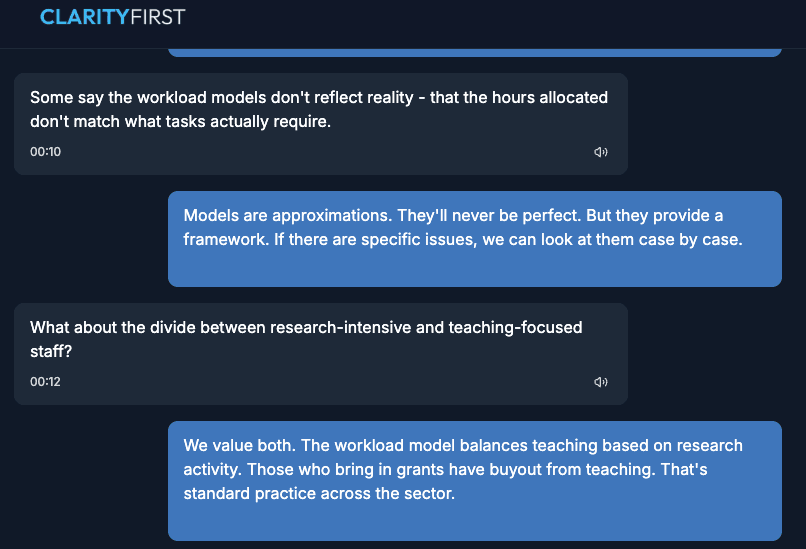

4. During the Interview

Understanding what happens during an interview helps you prepare participants and interpret the results.

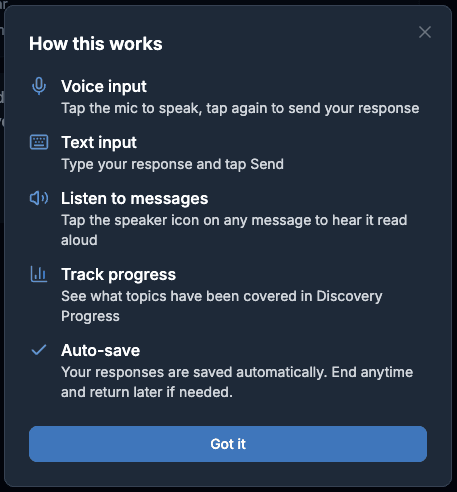

What Participants Experience

The interview is voice-first - participants speak naturally, and the AI responds conversationally. The experience is designed to feel like a structured conversation, not an interrogation. A typical session includes:

- A brief introduction explaining the process

- Open-ended questions that invite elaboration

- Follow-up questions based on what they've said

- Natural topic transitions

- A clear ending with thanks

How the AI Adapts

Socrates (our interviewer agent) doesn't just read from a script. It:

- Asks follow-up questions when a response suggests there's more to explore

- Skips topics that have already been covered naturally

- Adjusts language and pace based on the participant's style

- Tracks which topics have been covered to ensure completeness

What You See as Consultant

While interviews are in progress, you can monitor:

- Real-time transcription of the conversation

- Topic coverage indicators showing what's been discussed

- Session status (active, completed, abandoned)

- Key insights captured during the interview

For Teams Interviews

When using consultant-led Teams interviews, the workflow is slightly different:

- The participant schedules via the availability picker — no back-and-forth emails

- Your consultant conducts the interview via Microsoft Teams

- After the meeting, fetch the transcript with one click from the session page

- The same analysis pipeline processes the transcript — Humboldt, Bayes, Darwin and the rest

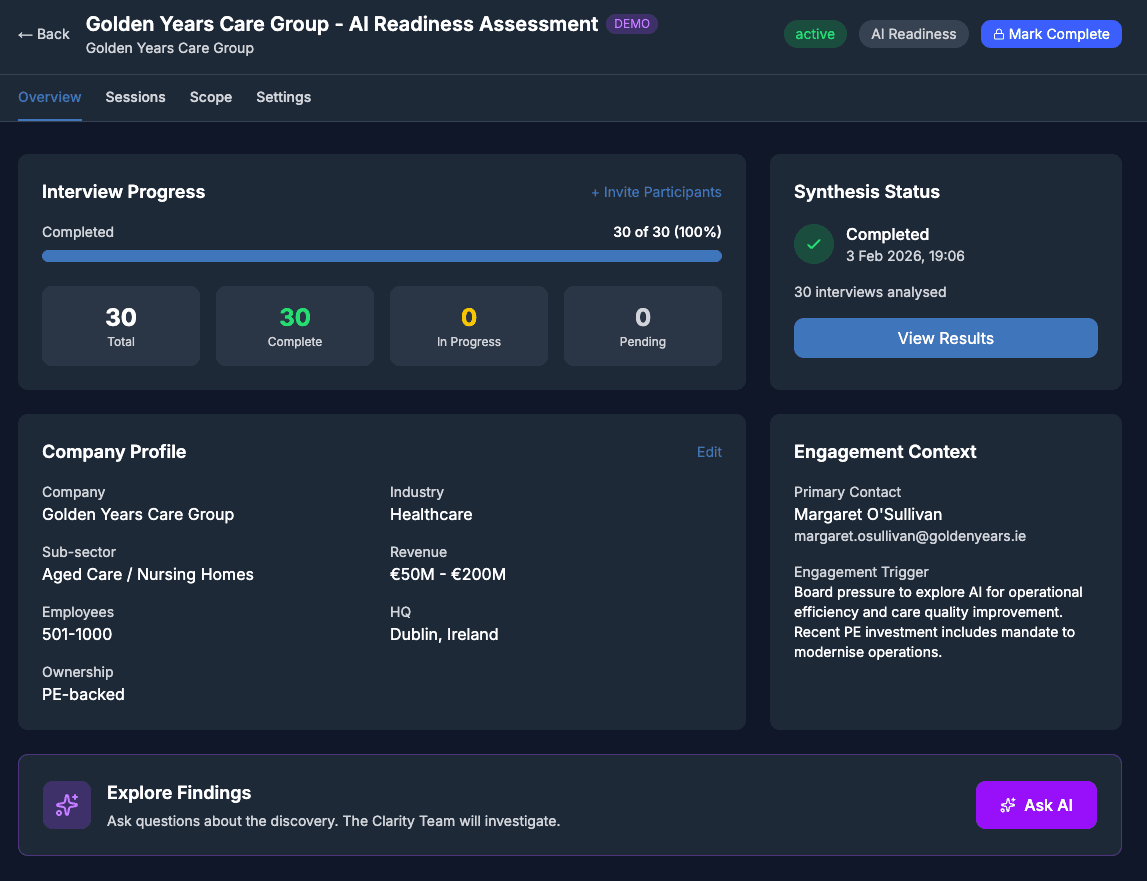

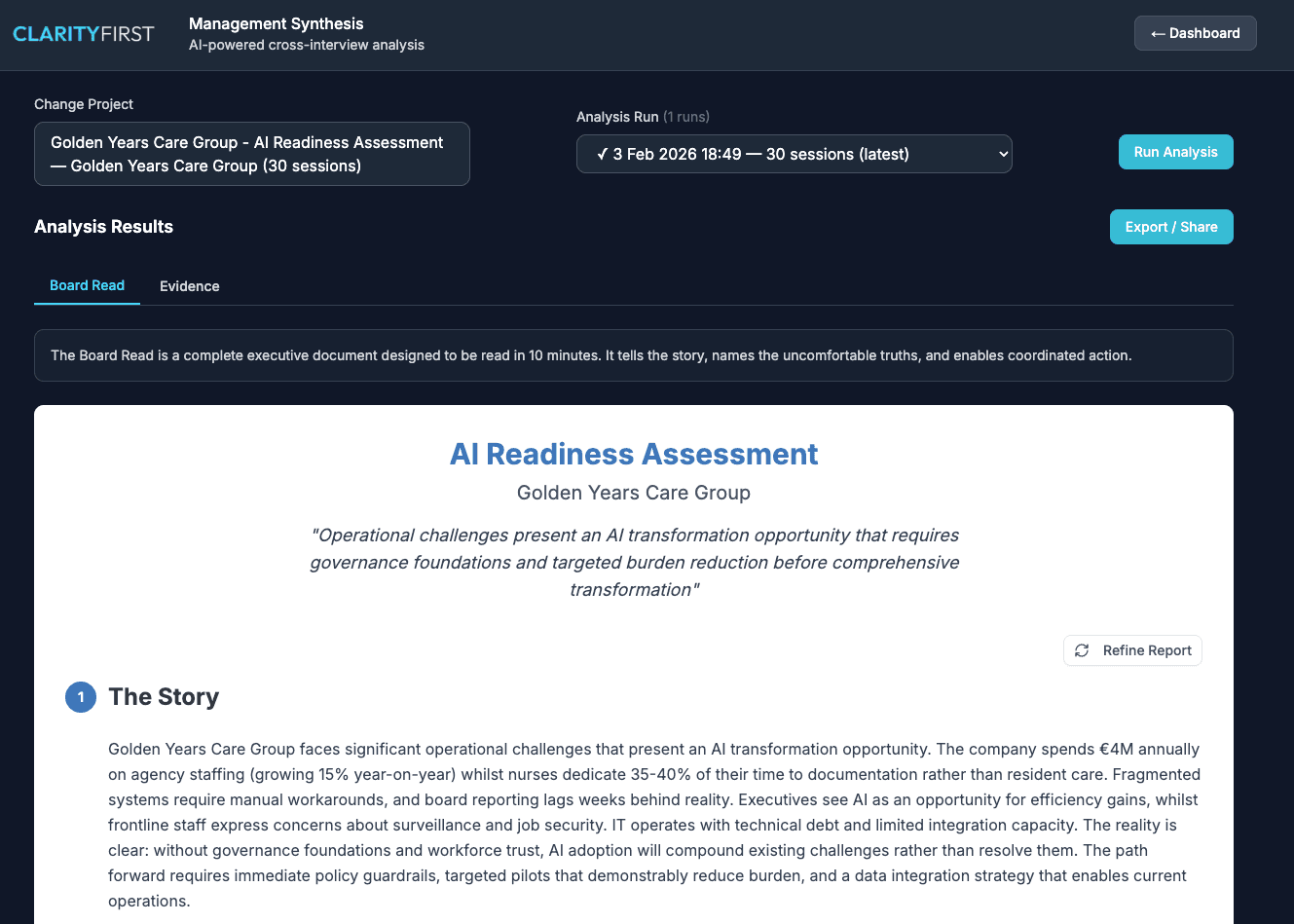

5. Working with the Synthesis

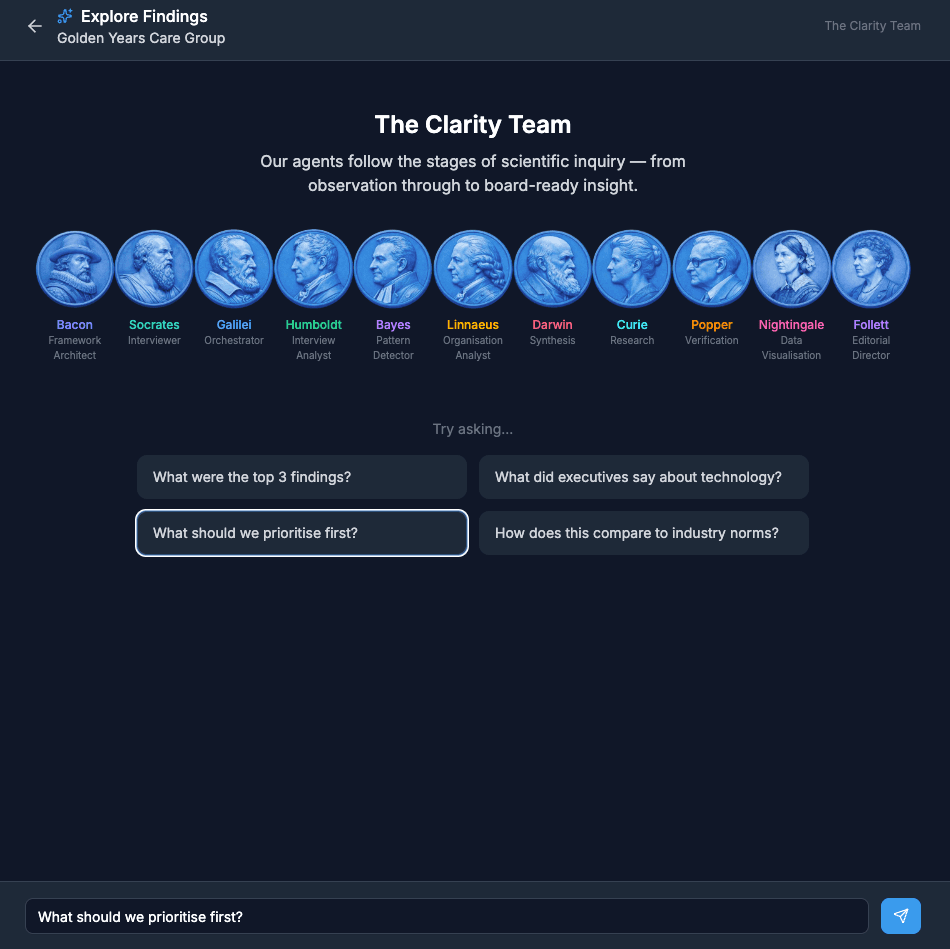

The synthesis is where Clarity First delivers its core value. Eleven AI agents work together to extract, analyse, and synthesise findings from your interviews. But the AI is a tool, not the final word - you stay in control.

Understanding the Agents

Each agent brings a different analytical perspective:

Galilei

Orchestrates the analysis pipeline, routing questions to the right expert during the Ask AI explore phase.

Bacon

Creates the synthesis brief from your project objectives - the roadmap for analysis.

Socrates

Conducts the AI interviews using the Socratic method - adapting questions based on responses.

Humboldt

Extracts key quotes, themes, and observations from each interview transcript.

Bayes

Identifies patterns across interviews - what's consistent, what's contradictory.

Linnaeus

Analyses organisational dynamics - structure, culture, relationships.

Darwin

Synthesises everything into findings, recommendations, and executive summary.

Curie

Adds external research and industry context to support findings.

Popper

Verifies findings against the evidence - the quality control step.

Nightingale

Transforms analysis into compelling visualisations - energy maps, tension maps, and decision trees.

Follett

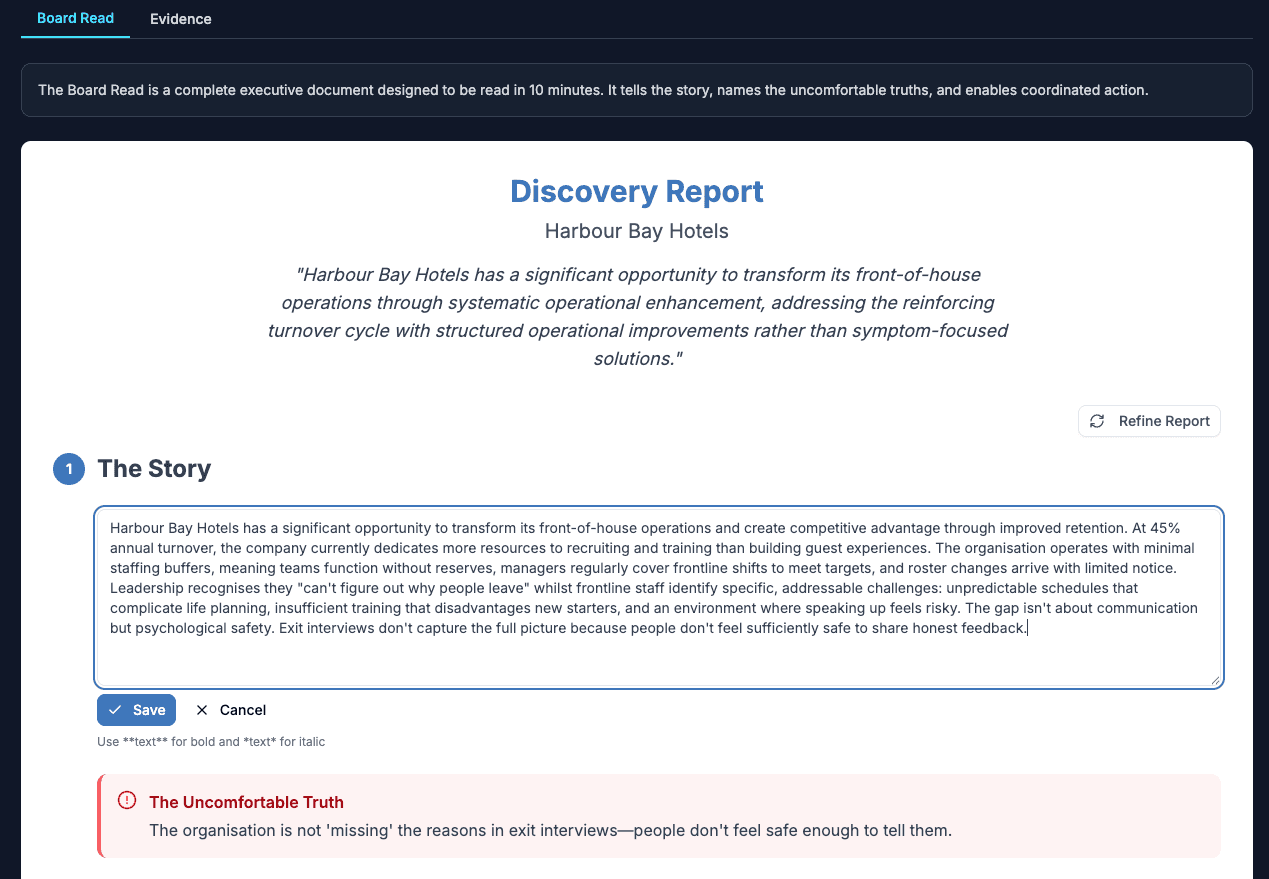

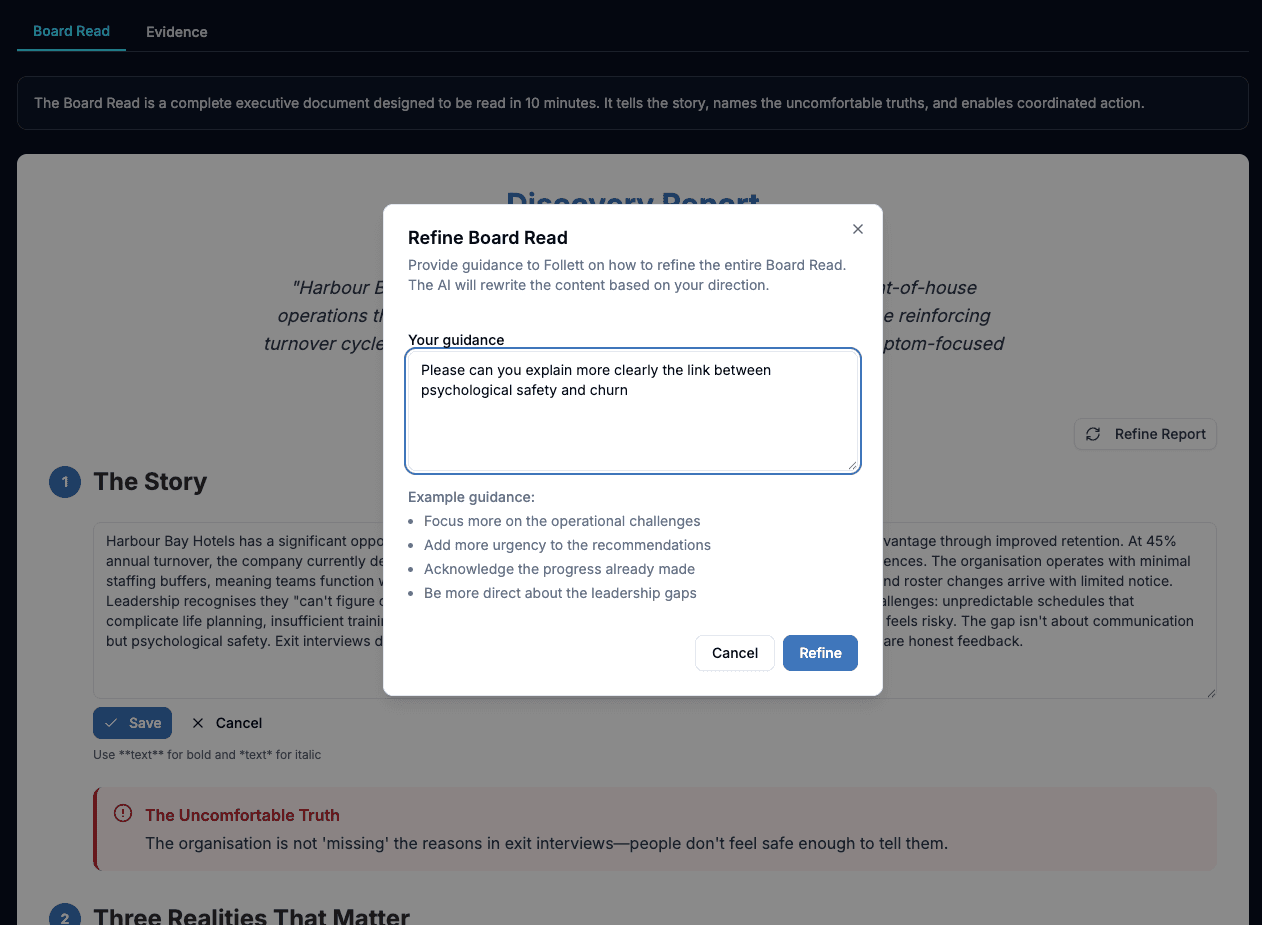

Compresses insights into a board-ready narrative - the executive deliverable.

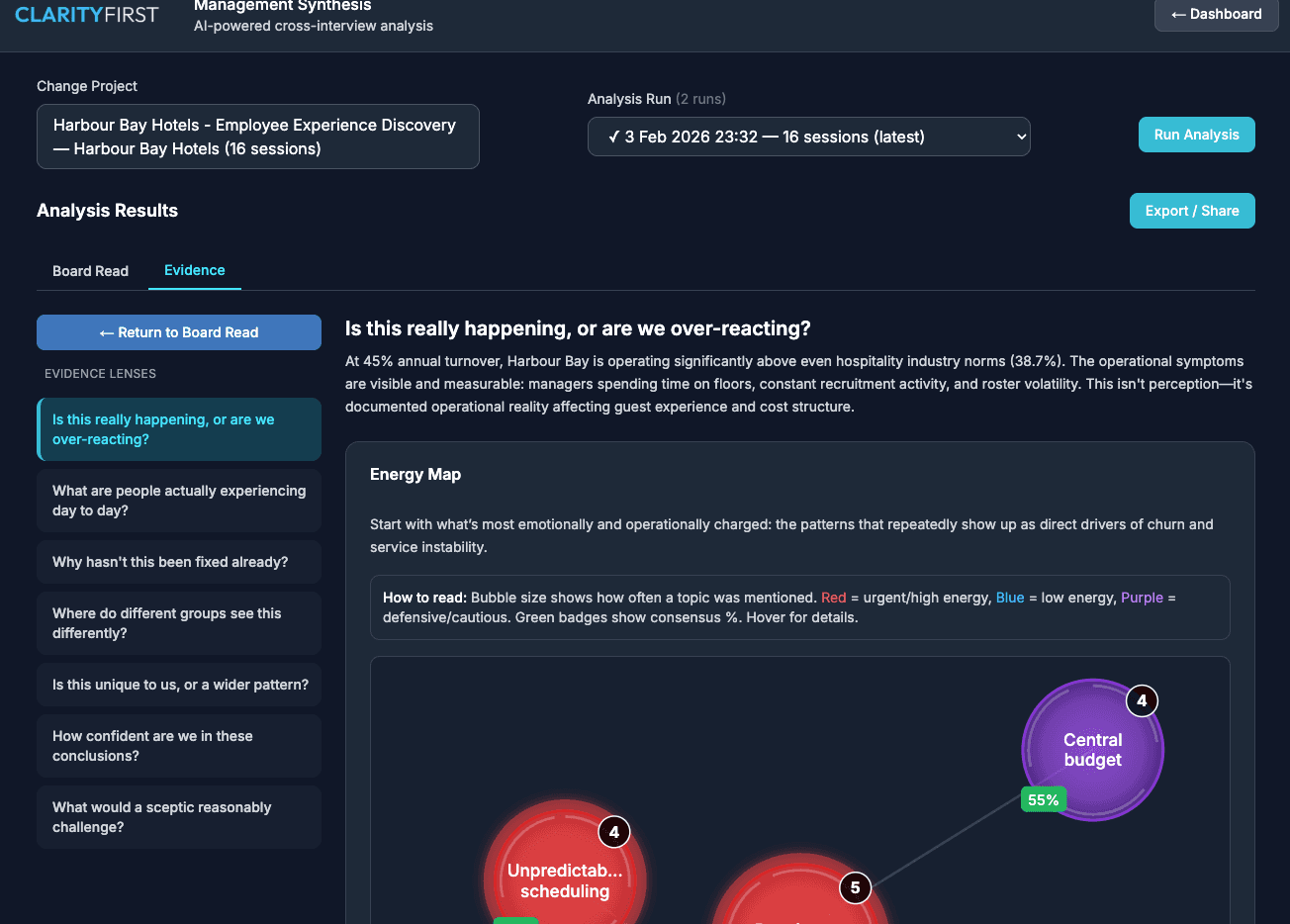

Evidence Lenses

The Evidence tab provides multiple lenses to explore your findings. Each lens answers a different executive question:

- Is this really happening? - Validate findings against operational reality

- What are people experiencing? - Day-to-day impact on staff

- Why hasn't this been fixed? - Systemic blockers and root causes

- Where do groups see this differently? - Perception gaps between levels

- How confident are we? - Evidence strength and coverage

Nightingale's visualisations - Energy Maps, Tension Maps, and Decision Trees - make patterns immediately visible, helping executives grasp complex dynamics at a glance.

You Stay in Control

The synthesis output is a starting point, not a final deliverable. You can:

- Edit findings: Adjust wording, change severity, add nuance

- Refine the executive summary: Send it back with specific guidance ("focus more on data governance", "tone down the technology recommendations")

- Review evidence: Click through to the original quotes supporting each finding

- Add your own insights: Combine AI analysis with your consultant expertise

From Analysis to Insight

The AI does the heavy lifting. This frees you up for the higher-value work:

- Strategic interpretation - what do these findings mean for this client?

- Prioritisation - what should they tackle first?

- Recommendations - specific, actionable next steps

- Client conversations - discussing findings, answering questions, building relationships

Instead of spending days coding transcripts, you spend hours refining insights and preparing recommendations. The deliverable is better, and it's ready faster.

6. Presenting to Stakeholders

Great analysis is wasted if it's not communicated well. Clarity First provides multiple ways to share findings with stakeholders.

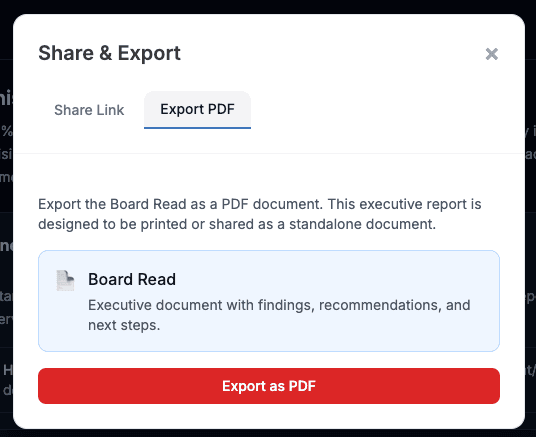

Export Options

Choose the format that fits your client's needs:

- PDF Report: Comprehensive written report with executive summary, findings, evidence, and recommendations. Best for formal deliverables and documentation.

- PowerPoint: Presentation-ready slides with key findings and visuals. Best for steering committees and workshop sessions.

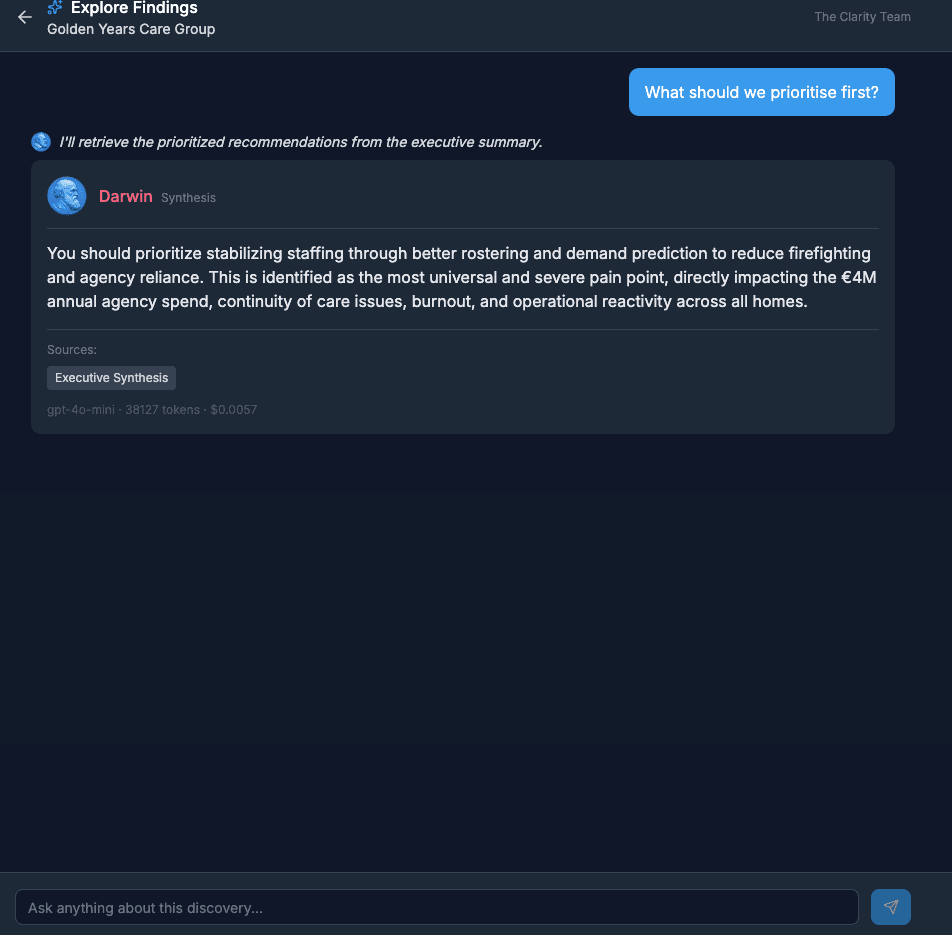

The Query Portal (Ask AI)

After synthesis, you can give stakeholders access to the Query Portal. This lets them ask questions about the findings directly. Galilei orchestrates the response, routing queries to the right specialist agents and synthesising evidence-backed answers. Example questions:

- "What did people say about the CRM system?"

- "How do operations and sales differ in their views on data quality?"

- "What were the main concerns about the proposed timeline?"

This extends the value of your discovery work - stakeholders can explore specific topics without scheduling follow-up meetings with you.

Handling Questions

When presenting findings, stakeholders will have questions. Clarity First helps you answer with confidence:

- Every finding links to supporting evidence (direct quotes)

- You can show how many people raised a particular issue

- Contradictory views are captured, not smoothed over

- The Query Portal can answer ad-hoc questions in real time

7. Common Pitfalls

Learn from common mistakes that reduce the value of discovery projects.

Vague objectives → Unfocused interviews

If you don't know what you're looking for, the AI can't help you find it. Generic objectives like "understand the current state" produce generic findings.

Fix: Write objectives as specific questions. What decisions will this discovery inform?

Not briefing participants → Anxiety and short answers

Participants who don't know what to expect give guarded, minimal responses. They're worried about saying the wrong thing.

Fix: Send clear invitations. Explain the process, topics, and confidentiality before the interview.

Skipping the refinement step → Generic outputs

The AI synthesis is a starting point. If you export it unchanged, you're delivering AI output, not consultant insight.

Fix: Always review, edit, and refine. Add your judgment. Shape the narrative for this specific client.

Treating synthesis as final → Missed opportunities

The synthesis answers the questions you asked. But discovery often reveals unexpected themes. Don't ignore findings that weren't in your original scope.

Fix: Review all findings, not just those mapped to your objectives. Some of the best insights are surprises.

Too few interviews → Weak patterns

Pattern detection needs volume. With only 3-4 interviews, the AI can extract themes but can't reliably identify what's consistent vs. individual opinion.

Fix: Aim for at least 8-12 interviews for robust pattern analysis. More is better for organisation-wide discoveries.
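The arithmetic behind this is straightforward: with few interviews, a single voice makes up a large share of the evidence. The sketch below illustrates that point only. The `theme_support` helper and the sample themes are hypothetical, and this is not a description of how Bayes detects patterns.

```python
from collections import Counter


def theme_support(interview_themes: list[set[str]]) -> dict[str, float]:
    """Return the share of interviews that mention each theme."""
    total = len(interview_themes)
    # Each interview is a set, so a theme counts at most once per interview.
    counts = Counter(theme for themes in interview_themes for theme in themes)
    return {theme: n / total for theme, n in counts.items()}


# Hypothetical themes extracted from four interviews.
small_sample = [
    {"data silos", "manual reporting"},
    {"data silos"},
    {"unclear ownership"},
    {"data silos"},
]
for theme, share in sorted(theme_support(small_sample).items()):
    print(f"{theme}: {share:.0%}")
```

In a sample of four, a theme raised by one person already accounts for 25% of interviews. In a sample of twelve, the same single mention is about 8%, which makes it easier to separate shared patterns from individual opinion.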

8. Frequently Asked Questions

How long does a discovery interview take?

A typical AI-assisted discovery interview takes 20-30 minutes. The AI adapts to the participant, asking follow-up questions based on their responses. Some interviews may be shorter if the participant is concise, or longer if they have rich insights to share. Consultant-led Teams interviews typically run 30-45 minutes, as the natural conversation flow allows for deeper exploration.

What if a participant has accessibility needs?

Clarity First supports multiple approaches. Participants can use text instead of voice for AI interviews. For participants who prefer a human conversation, consultant-led Teams interviews are a built-in alternative. Pro and Expert customers can also import transcripts from interviews conducted elsewhere. The same AI analysis is applied regardless of how the interview was conducted.

Can participants see the questions before the interview?

Participants receive an invitation explaining the topics that will be covered, but not the specific questions. This is intentional - it encourages natural, spontaneous responses rather than rehearsed answers. The AI adapts its questions based on what the participant shares.

How is interview data protected?

All interview data is encrypted in transit and at rest. AI processing runs via Microsoft Azure OpenAI in Sweden Central (EU). Data is stored in Frankfurt (EU) and is never used to train AI models. You retain full ownership of your data, and it can be deleted at any time. See our Privacy Policy and DPA for full details.

What if the AI misunderstands something in an interview?

The synthesis stage includes verification by our Popper agent, which cross-checks findings against the original evidence. You can also review all extracted quotes and edit findings before finalising. The AI provides the analysis; you stay in control of the output.

Do Teams interviews use credits?

No. Only AI-conducted interviews consume credits. Consultant-led Teams interviews and imported transcripts are included free with any active subscription or credit pack.

How does the hybrid approach work?

Clarity First supports three interview modes in the same project: AI interviews via Socrates, consultant-led interviews via Microsoft Teams, and transcript import for interviews conducted elsewhere. All three are analysed using the same framework and AI agents, ensuring consistency. Use AI for volume, Teams for VIPs and sensitive topics, and import for existing material.

Ready to try it?

Experience an AI discovery interview yourself with our free 10-minute demo.
